Postman tests and how to include them in Bamboo build and deployment

Writing unit tests that ensure individual sections of an application or service are running correctly is a process deeply ingrained in most developers’ workflow. Another form of testing that is arguably more difficult to include in your projects is integration testing. Integration tests allow you to ensure all of the different units of code that make up your application are able to work together cohesively without any trouble. This usually involves testing a deployed version of your code and because of this, integration tests are generally perceived to be time-consuming and difficult to create. A very useful tool that can help you create integration tests is Postman.

Postman provides developers with a set of tools that support every stage of the API lifecycle. The most frequent use case for a developer is sending a request to an endpoint in order to debug an API that was recently changed or broken. While this certainly serves a purpose for a development team, it's only a small part of what Postman offers. Another very powerful but underused feature in Postman's set of tools is the ability to create and automatically run a collection of tests.

Postman Tests

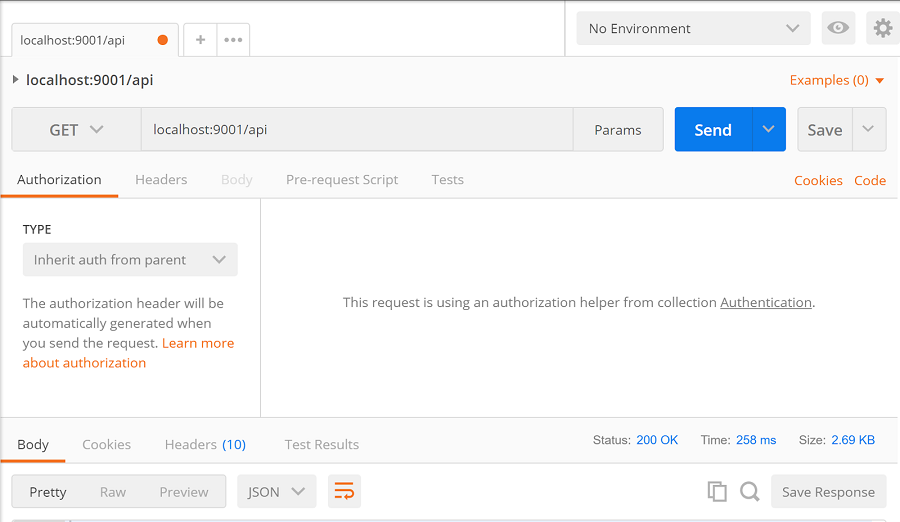

A typical request to a locally hosted backend using the Postman desktop application may look something like this:

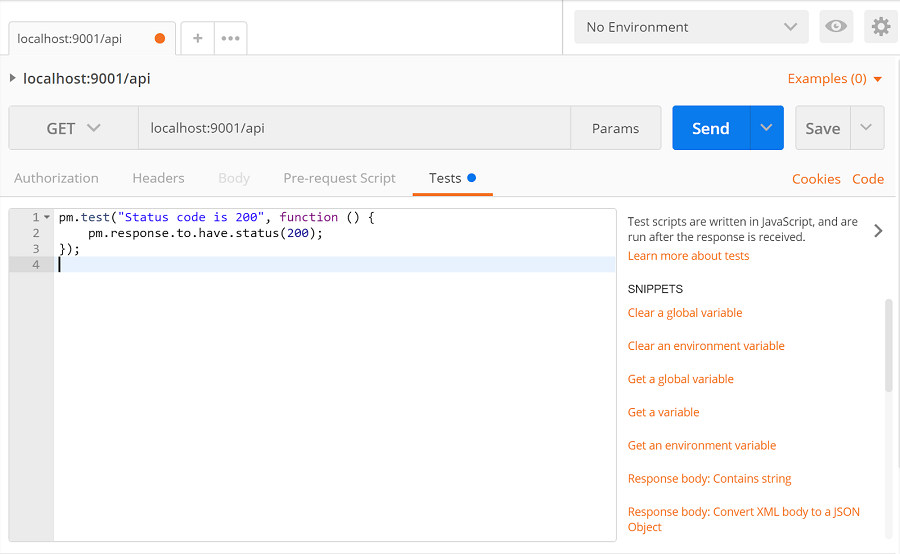

This request is not complex at all. In fact, it's about as basic as it gets, but it accomplishes its job of communicating with the locally hosted backend and returning a response. With Postman, we can create elegant tests for our endpoints using a few lines of code. Let’s say, for example, that we want to create a test for the endpoint above and ensure that all requests made to it return a 200 HTTP status code. All we need to do is open the “Tests” tab of the Postman request and start writing the test.
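A minimal sketch of such a test is shown here. `pm.test` and `pm.response.to.have.status` are part of Postman's scripting sandbox; wrapping them in a function named `registerStatusTest` is purely an illustrative choice so the check can also be exercised outside Postman.

```javascript
// Script for the Tests tab of a request. Postman's sandbox provides `pm`.
// The wrapper function is an assumption made for this sketch, not a
// Postman requirement; the body of the function works on its own too.
function registerStatusTest(pm) {
  pm.test("Status code is 200", function () {
    // Fails the test if the response status is anything other than 200.
    pm.response.to.have.status(200);
  });
}

// Inside Postman `pm` is defined, so the test is registered immediately.
// Outside Postman this guard simply skips the call.
if (typeof pm !== "undefined") {
  registerStatusTest(pm);
}
```

After pasting this into the tab and sending the request, the result should appear under the response's test results.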

On the right-hand side of the test tab is a list of links which generate code to make testing through Postman a bit easier. The Postman testing suite comes with a set of useful features such as setting up environment variables and global variables to use in subsequent request bodies, converting the response content type and setting up response object definitions to be automatically validated. More information about the syntax of Postman tests and how to create them can be found in the Postman documentation.

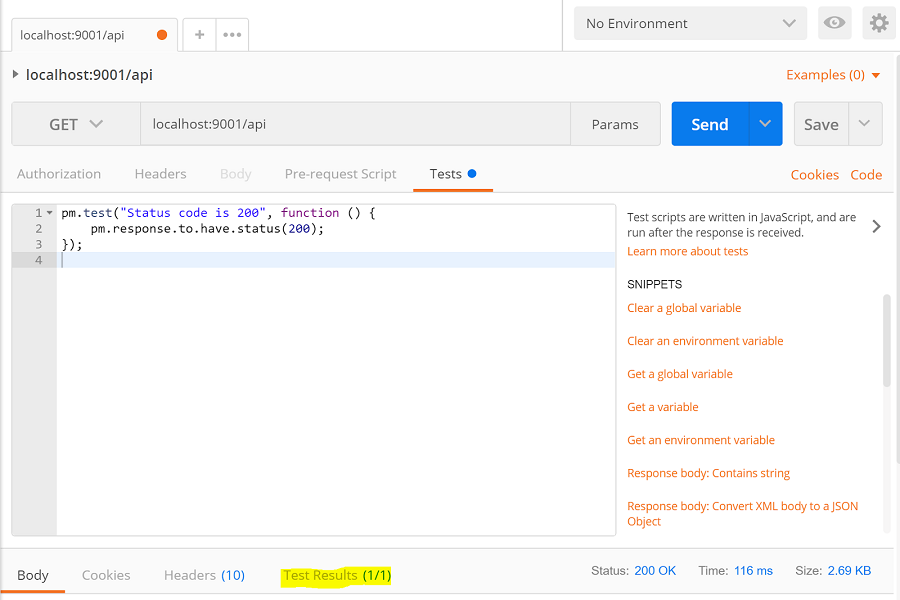

Continuing with this test, we resend the request and ensure that our test is passing.

Now that we are happy with our newly created test, we can save our request along with its test into a Postman collection. Collections allow you to organise requests into logical groupings. These collections can then be shared with other developers by using shareable links or by exporting the collection into a JSON file, which can then be shared in any manner. We like to keep test collections in source control so that each developer has a central location to pull the tests from. We also use shareable links to share collections with our product and testing team members. By doing this, we give non-engineering staff the ability to quickly test our development environment endpoints without having to check out code or run bulky servers locally.

Postman environments

Before moving on to creating numerous requests and tests, it is useful to understand how environments in Postman work. Essentially, they allow you to store a set of key-value pairs which can be used in requests and tests. The Postman documentation covers them in more detail.
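To illustrate, the sketch below reads one environment value and stores another from a test script. `pm.environment.get` and `pm.environment.set` are Postman sandbox calls; the variable names `baseUrl` and `lastStatusCode` are hypothetical and would need to match whatever your own environment defines.

```javascript
// Script for the Tests tab. `baseUrl` and `lastStatusCode` are
// hypothetical variable names chosen for this sketch.
function useEnvironment(pm) {
  // Read a value defined in the currently selected environment.
  const baseUrl = pm.environment.get("baseUrl");

  pm.test("baseUrl is set in the selected environment", function () {
    pm.expect(baseUrl).to.be.a("string");
  });

  // Save a value so later requests can reference it as {{lastStatusCode}}.
  pm.environment.set("lastStatusCode", pm.response.code);
}

// Inside Postman `pm` is defined, so the script runs immediately.
if (typeof pm !== "undefined") {
  useEnvironment(pm);
}
```

In the request itself, the same value can be referenced as `{{baseUrl}}` in the URL, so switching environments changes the target without editing the request.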

One important point to note is that environments can be shared in the same way as collections.

Running a Postman collection locally

With all of that in mind, we can now repeat the process of creating requests and tests for all of our server’s endpoints. It is advisable to create tests that cover a wide range of requirements. For example, create a test that ensures that one of the endpoints returns a body with certain data fields. Once the requests and their tests have been saved, configure Postman to run them all together as a collection.
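A test of that kind might look like the following sketch. `pm.response.json()` and `pm.expect` are provided by Postman; the field names `id` and `name` are hypothetical stand-ins for whatever your endpoint returns.

```javascript
// Script for the Tests tab. The field names `id` and `name` are
// hypothetical; replace them with the fields your endpoint returns.
function registerBodyTest(pm) {
  pm.test("Response body contains the expected fields", function () {
    // Parse the response body as JSON.
    const body = pm.response.json();
    pm.expect(body).to.have.property("id");
    pm.expect(body).to.have.property("name");
  });
}

// Inside Postman `pm` is defined, so the test is registered immediately.
if (typeof pm !== "undefined") {
  registerBodyTest(pm);
}
```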

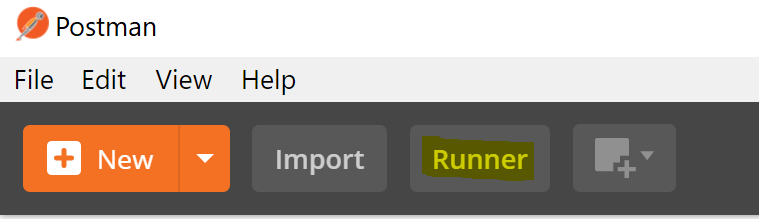

To run a collection, locate the test “runner” which can be found in the upper left corner.

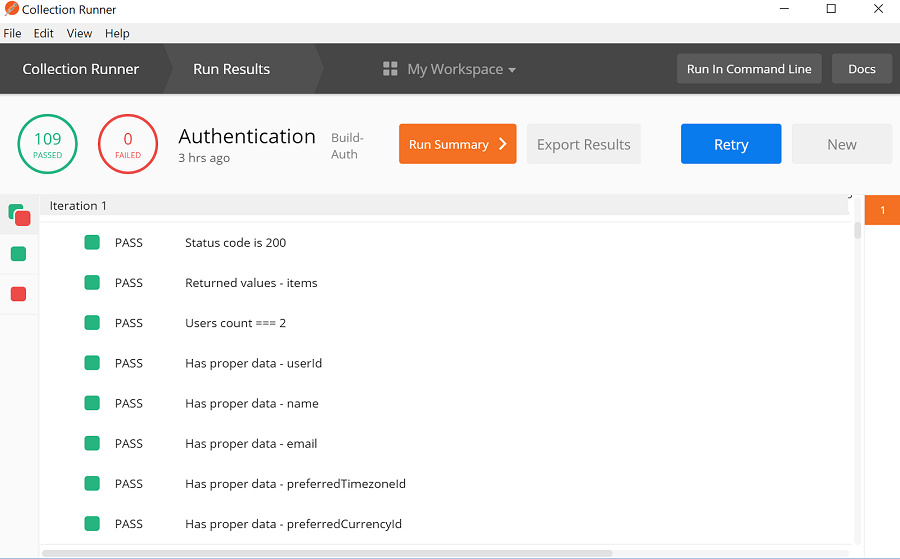

From here, select the collection and any environment created for that collection. Next, run the collection. If everything goes well, a green circle will indicate the number of tests that have passed. Further down, the window displays detailed information about individual tests and requests, such as the duration of each request and which assert statements succeeded.

Integrating these collections into Bamboo build/deployment

With this amazing set of tests for these endpoints, wouldn’t it be nice to be able to integrate it into the Bamboo build and deployment process? Specifically, it would be pretty cool to run a Postman collection against a deployment to ensure that the code changes work in the real world. Well, there's some bad news and some good news. Unfortunately, Postman integration tests are not supported natively in Bamboo. However, where there’s a will, there’s a way. This “way” isn’t exactly the most elegant form of performing testing in Bamboo, but it achieves the goal:

To be able to run a collection of Postman tests against a deployment of one of our backend services.

The team over at Postman developed and released a very useful npm package named Newman. Newman allows users to invoke Postman test collections through a CLI, and because it is built on Node.js it can easily be invoked during a build or deployment plan inside Bamboo. However, Bamboo doesn’t directly support integration tests for deployments. The collection can be run right after deployment using a script to invoke Newman, but there is no direct way of parsing the results and linking them to that deployment. To overcome this hurdle, we need to get our hands a little dirty.

The solution that we implemented involved executing our Postman collection during the deployment plan, uploading the test results to an Amazon S3 bucket, invoking a completely separate build plan through an API call and finally pulling and parsing those test results into that build plan. It certainly wasn’t what we had in mind when we first thought about integrating Postman with Bamboo and there are some limitations to the design, but it does the job.

Even though it is possible to trigger another build plan from a successful deployment using Bamboo triggers, we opted not to follow this approach. We wanted to ensure that every successful deployment would have our Postman collection tests run and correctly parsed. To do that, we told our second build plan where to find the test results that it should parse. If we had tried to use triggers to do the same job, we would have run into the problem of not being able to tell the build where to find the test results. We could have specified a static location where we would write the latest test results, but there were some edge-case scenarios with this approach that could have led to some test results not being parsed. Having said that, using triggers to invoke the build plan instead of an API call is a completely valid strategy and I’m sure there are solutions out there to the aforementioned problems.

Setting up a Postman collection to run after deployment

Before we could begin the process of setting up our collection to run after our service had deployed, we needed to ensure that our deployment plan had access to the collection and environments we wanted to use. One way to achieve this is to store the collection and environments in a source control system. If you do this, create a task at the beginning of the deployment to pull the collection and environments from the remote repository. In our case, we decided to keep things simple and store our collections alongside the code that our collection tests interact with. This meant that we already had access to our collection tests in the build plan that preceded our deployment plan.

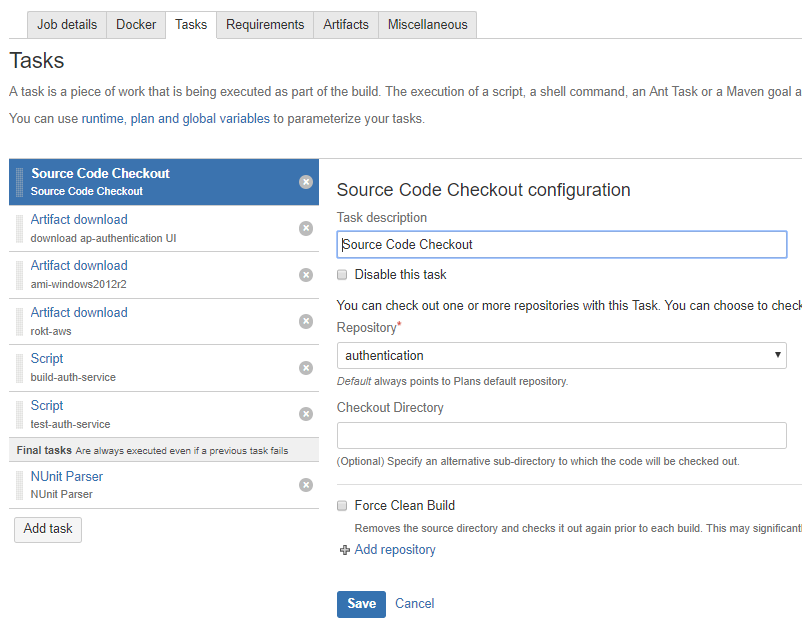

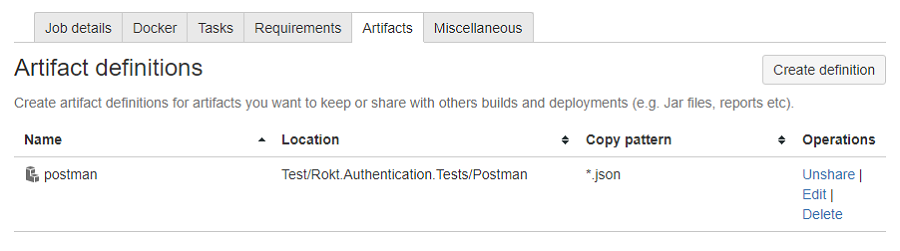

In the build plan, we pulled the repository of our service, ran our unit tests, and created our artifacts. One of the artifacts we created from our build consisted of the Postman collections and environments we wanted to test after the deployment.

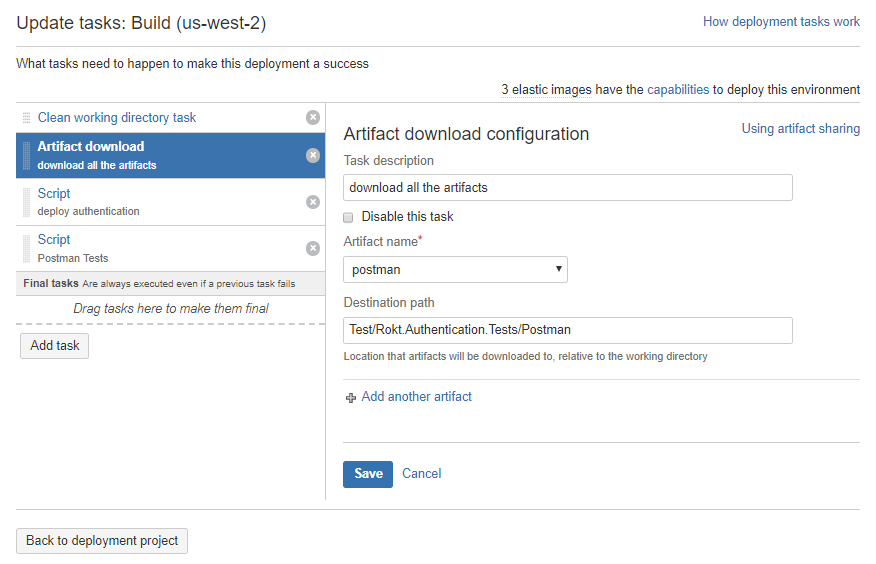

In the deployment plan, we added a task to download this artifact from the preceding build.

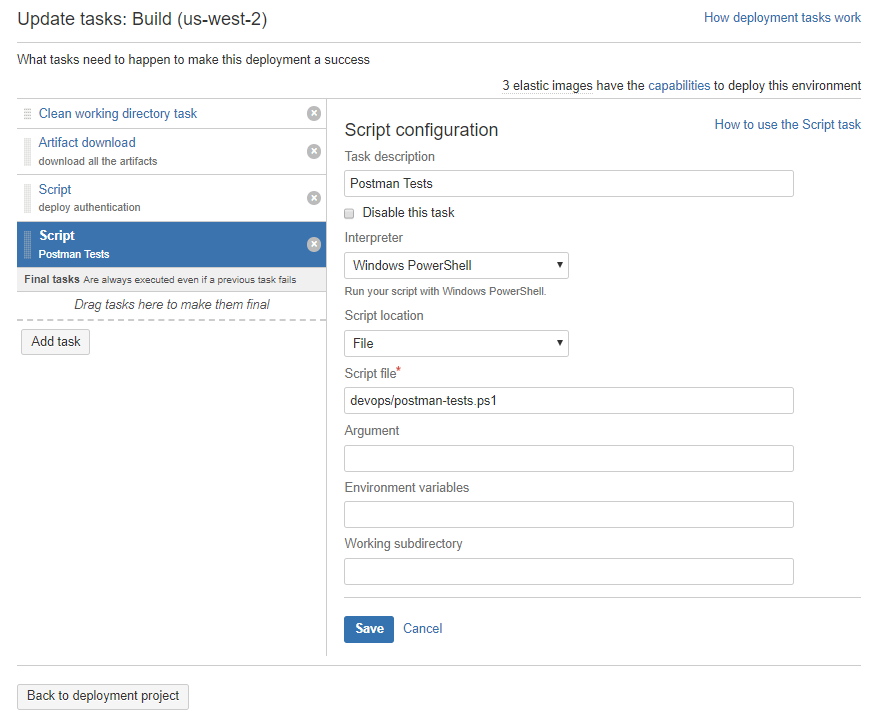

With access to the collection, we started to work on configuring the execution of the collection and tests. In order to run the collection against recently deployed endpoints, we created an additional task at the end of the deployment. The additional task invoked a script which used Newman to execute the Postman tests. We decided to include this script in the build artifact containing our Postman collection to make it easier to access.

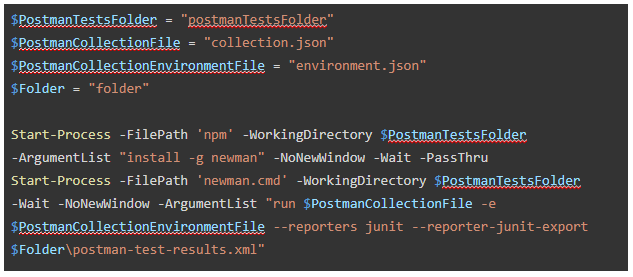

How you configure your script is up to you but if you want to follow the approach we used you’ll need to include a few sections of code. First, install Newman and tell it to execute your Postman collection.

When you run Newman, you can specify where the results of your collection tests should be stored. In our script, we use the $Folder variable for this.
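As a rough illustration of this step, the sketch below shells out to the Newman CLI from Node.js rather than from the PowerShell script we used. The file names and the `resultsFolder` parameter (standing in for `$Folder`) are assumptions; `--environment`, `--reporters` and `--reporter-junit-export` are Newman CLI options.

```javascript
const { spawnSync } = require("node:child_process");
const path = require("node:path");

// Build the argument list for `newman run`. The results file name is a
// hypothetical choice; the JUnit reporter writes XML that Bamboo can parse.
function buildNewmanArgs(collectionFile, environmentFile, resultsFolder) {
  return [
    "run", collectionFile,
    "--environment", environmentFile,
    "--reporters", "cli,junit",
    "--reporter-junit-export", path.join(resultsFolder, "newman-results.xml"),
  ];
}

// Run the collection and return Newman's exit code.
// Assumes Newman was installed beforehand, e.g. with `npm install -g newman`.
function runCollection(collectionFile, environmentFile, resultsFolder) {
  const args = buildNewmanArgs(collectionFile, environmentFile, resultsFolder);
  const result = spawnSync("newman", args, {
    stdio: "inherit",
    // On Windows the `newman` command is a .cmd shim, which needs a shell.
    shell: process.platform === "win32",
  });
  // A non-zero status means the run failed; null means the process
  // could not be started or was killed by a signal.
  return result.status;
}

// Example call with hypothetical file names:
// runCollection("collection.json", "dev.postman_environment.json", "results");
```

Keeping the argument list in its own function makes it easy to log exactly what was executed when a deployment misbehaves.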

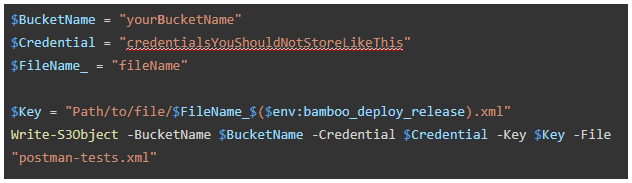

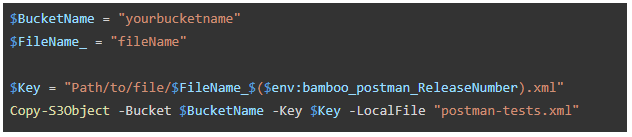

Then to upload the test results, make use of the AWS CLI. To ensure every test result file is uniquely identifiable, we opted to append the bamboo_deploy_release variable to the end of each test result file name.
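The upload could be sketched along these lines, again in Node.js rather than PowerShell. The bucket name, the `postman-results/` key prefix and the file naming are hypothetical; `aws s3 cp` is the AWS CLI copy command, and Bamboo exposes its variables to script tasks as environment variables such as `bamboo_deploy_release`.

```javascript
const { spawnSync } = require("node:child_process");

// Build a unique S3 key by appending the release name to the file name.
// The "postman-results/" prefix and the file name are hypothetical.
function buildResultKey(release) {
  return `postman-results/newman-results-${release}.xml`;
}

// Copy the local results file to S3 using the AWS CLI.
// Assumes the AWS CLI is installed and credentials are already configured.
function uploadResults(localFile, bucket, release) {
  const destination = `s3://${bucket}/${buildResultKey(release)}`;
  const result = spawnSync("aws", ["s3", "cp", localFile, destination], {
    stdio: "inherit",
  });
  return result.status; // 0 on success
}

// Example call with a hypothetical bucket name:
// uploadResults("results/newman-results.xml", "my-test-results-bucket",
//               process.env.bamboo_deploy_release);
```

Because the key is derived only from the release name, the parsing plan can rebuild the same key from the variable it receives and reverse the `aws s3 cp` arguments to download the file. Release names containing spaces or other special characters would need sanitising first.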

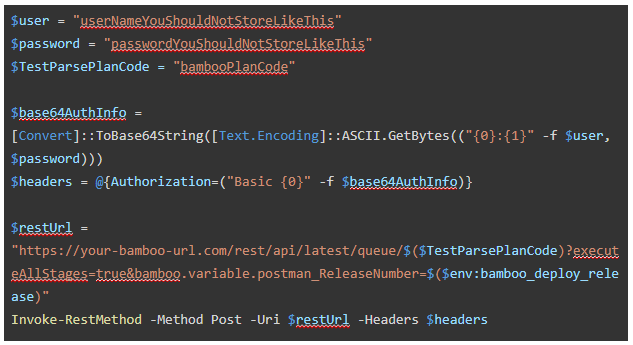

From here, invoke the build plan that will parse the test results. We passed along the same bamboo_deploy_release variable in order to be able to identify and download the right set of test results.
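One way to make that call is through Bamboo's REST API, which can queue a plan with a POST to `/rest/api/latest/queue/{planKey}` and accepts variables as `bamboo.variable.<name>` parameters. The sketch below assumes Node.js 18 or later for the built-in `fetch`; the base URL, the plan key, the variable name `postmanRelease` and the use of basic authentication are all hypothetical and will differ per installation.

```javascript
// Build the URL that queues the parsing plan and passes the release name.
// `postmanRelease` is a hypothetical variable name for this sketch.
function buildQueueUrl(bambooBaseUrl, planKey, release) {
  // Ensure a trailing slash so any context path in the base URL is kept.
  const base = bambooBaseUrl.endsWith("/") ? bambooBaseUrl : bambooBaseUrl + "/";
  const url = new URL(`rest/api/latest/queue/${planKey}`, base);
  url.searchParams.set("bamboo.variable.postmanRelease", release);
  return url.toString();
}

// Queue the build. Basic authentication is an assumption; your Bamboo
// instance may require a different scheme such as an access token.
async function triggerParsingBuild(queueUrl, username, password) {
  const credentials = Buffer.from(`${username}:${password}`).toString("base64");
  const response = await fetch(queueUrl, {
    method: "POST",
    headers: {
      Authorization: `Basic ${credentials}`,
      Accept: "application/json",
    },
  });
  if (!response.ok) {
    throw new Error(`Bamboo responded with HTTP ${response.status}`);
  }
  return response.json();
}

// Example with hypothetical values:
// const url = buildQueueUrl("https://bamboo.example.com", "PROJ-PARSE",
//                           process.env.bamboo_deploy_release);
// triggerParsingBuild(url, "build-user", "secret").then(console.log);
```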

Parsing and reporting the results of collection tests

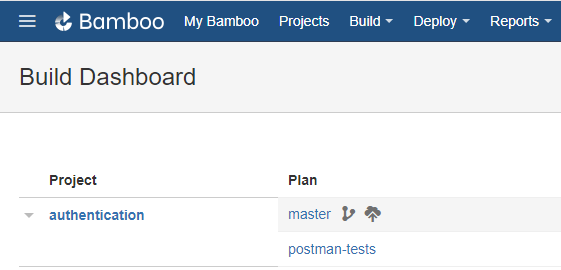

After all of that is set up, create the build plan and configure it to parse the collection test results. If you are familiar with Bamboo this will be quite easy to achieve. First, we need to create the plan.

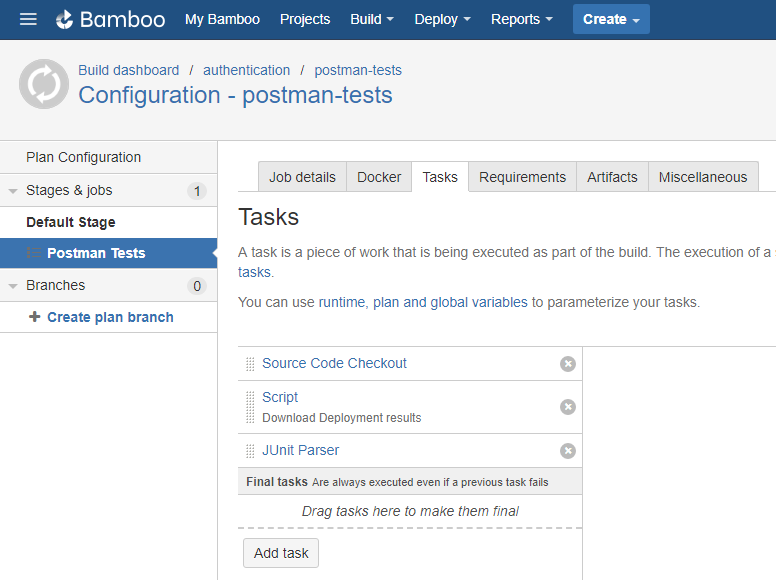

Then we need to configure the plan and add some tasks to it.

We checked out one of the repositories, which contained a shell script we created to download the collection test results from S3.

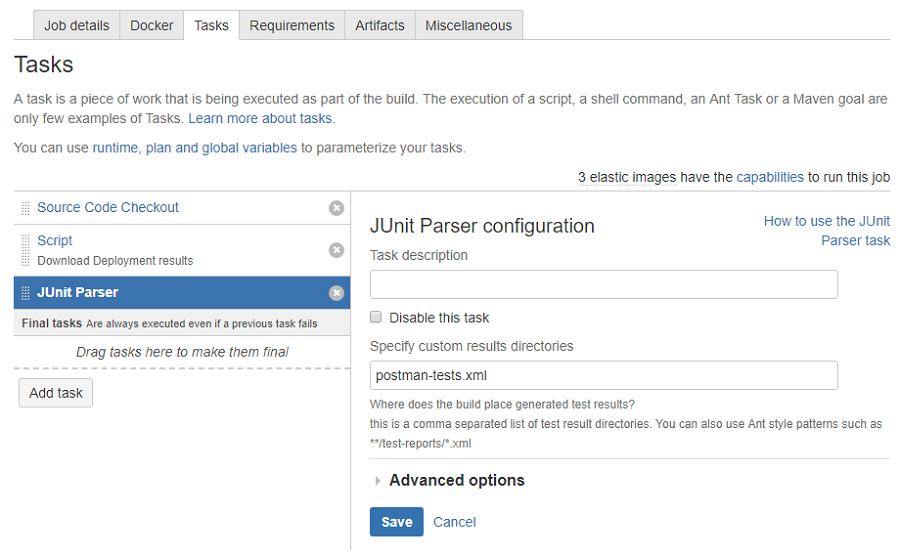

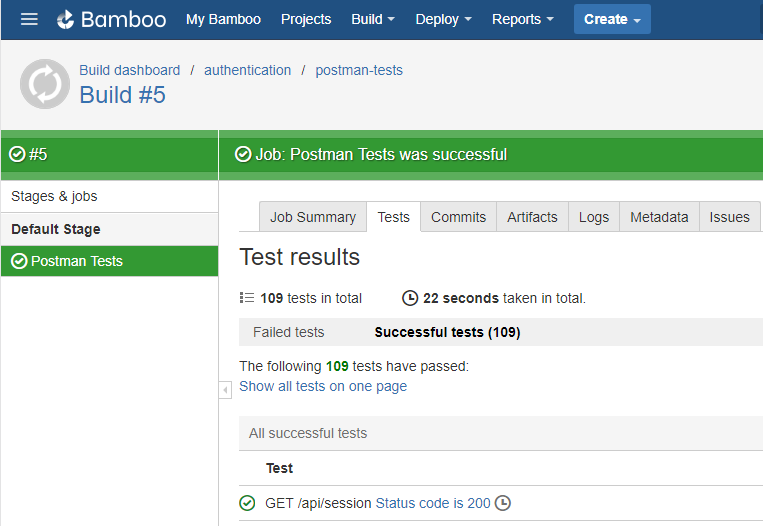

Finally, we used Bamboo's JUnit Parser task to parse the test results.

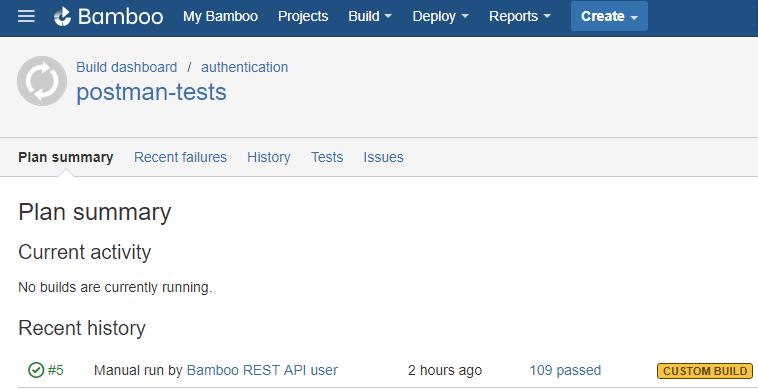

If you’ve set everything up correctly, your Postman collection should be executed whenever a deployment occurs, and the results should be reported by the build plan created above.

A summary of the events occurring

1. A code change lands on a branch.
2. The Bamboo build begins via a trigger.
3. The build passes and the Postman collection artifacts are created.
4. The Bamboo deployment begins via a trigger.
5. The deployment grabs the artifacts from the Bamboo build.
6. The deployment deploys your service.
7. The deployment runs your Postman PowerShell script.
8. The Postman collection is run.
9. The results are uploaded to S3.
10. A separate Bamboo build is triggered by an API call.
11. The separate build runs the download script and parses the collection test results.

Problems and things we could have done better

Due to the nature of the implementation, there was a small mismatch between the deployment of the service and the Postman collections that ran against it. As Bamboo doesn’t natively support integration tests, we ended up parsing the test results in a separate build plan. This was problematic as it created a divide between the deployment and the tests. If some of the Postman tests fail, the deployment is still marked as a success because, as far as it is concerned, it carried out all of its tasks successfully. This can make the process of tracking down the root cause of test failures harder than it should be.

Despite its issues, the solution we implemented still proved to be beneficial, with a prime example being increased awareness of service status. If our Postman collection tests fail for some reason, we can configure alerts which notify the assigned development team. If this situation does occur, the Postman collection test results help developers diagnose and understand what problems are present. By viewing the collection test results in Bamboo, a developer can easily see which tests have failed and which have passed. Without integration tests like these, problems and bugs become increasingly difficult to identify and resolve.